What does AI do to software?

∞ Apr 11, 2026

Benedict Evans surveys what AI does to software, and it certainly doesn’t kill it. He covers the familiar shifts: faster development, new AI-powered features, and likely disruption of incumbent software solutions. But then he turns to the most interesting question: what forms will intelligent interfaces take, and how will those forms change both business and customer?

Evans considers how SaaS and mobile both changed what software (and software companies) look like:

One way to think about both of these is that the first step was to do the old thing with a new tool, but the next step was to think of things that were native to the new form. We started by making a Flickr app for mobile, and then we made Instagram, and then we got Snap and TikTok, which embraced the fact that this was a device with a camera, and a touchscreen, and its own compute instead of just a website. Some of the early thinking around AI software is to look at generative interfaces, to think about how dynamic and intelligent the screens could be, and to wonder if you might move away from carefully fixed screens and predefined workflows. No one really knows, but a lot of people will have fun experimenting. …

As I say, in almost every essay, meanwhile, this is all very 2007, or 1997, or very 1982. We know this is huge, we can sort of see some of what’s coming, but we don’t really know how any of this is going to work. And so, there is an enormous amount of white space out there, for people to go out and create things, that have nothing to do with stupid ideas like ‘software is dead’.

Generative interfaces. Dynamic and intelligent screens. A shift away from static workflows.

Amen. That white space is where a new era of intelligent interfaces lives, and it’s exactly the territory that Sentient Design maps out. If you’re one of those people who will “have fun experimenting,” Sentient Design’s framework and design patterns will give you a head start. Get after it.

AI is the Closest Thing to a Genie Lamp

∞ Apr 11, 2026

“AI is the closest thing in the world to a genie lamp,” writes Alberto Romero in the Algorithmic Bridge newsletter. His point: the “magic” may be remarkable, but it shifts the effort to the wish. When AI can manifest what you ask on demand, the hard part becomes naming what you actually want. Alas, most people (and organizations) aren’t very good at that part.

The idea of thinking about “single-use” magical objects is that they invert the effort allocation 100%: the “how” is fully outsourced. How does the genie get you a billion dollars? How does it make you extremely handsome? You don’t care, you don’t want to know. So, you automatically realize that your mental effort must now be fully devoted to the complementary question: what do you want? …

The belief that doing takes more resources than deciding what to do has been the default operating mode for basically all of human life. The how has always been so expensive that the what barely matters. You didn’t need to be good at wishing because you were never going to get most of what you wished for anyway.

So now you can get what you wish for (in software and software-shaped problems, at least). As that capability spreads more evenly, the differentiator won’t be speed or execution but knowing what to make in the first place. Romero points out that people call this critical point of view by many names: taste, judgment, decision-making, agency, curiosity, or imagination. “For a while, I thought these were all the same thing in various disguises,” he writes. “But I think they’re actually different skills that all became load-bearing at once.”

All these skills share a family resemblance—they’re all “what” skills rather than “how” skills—but they’re not interchangeable. Someone can have extraordinary taste and zero agency (the critic who never creates). Someone can have strong agency and terrible judgment (the founder who moves fast toward the wrong thing). Someone can have all the curiosity in the world and zero agency (the vibe-coder who is handling 10 projects at once but none of them will have any impact in the world). Etc.

All these skills are also well-known to those who have dedicated time to thinking about these matters, but for the rest of us, they were all invisible before because the “how” bottleneck was sitting in front of them like a boulder blocking a cave entrance. Now the boulder is rolling away and it turns out there’s an entire stack of capacities behind it that most people never developed because they never had to.

When you suddenly have the ability to do anything—and fast—then the critical skill becomes knowing what to build. And those people Romero mentions, the ones with “dedicated time to thinking about these matters”? Those people are called designers. The best designers have the skill to learn the outcomes that people truly need… and from there, work through the frictions blocking those outcomes to finally understand the deep, twisty heart of the problem. Naming the outcome and the problem is how you begin to work toward a solution—the what, not the how.

AI is just a technology. It’s a strange and powerful one, but in the end it’s an implementation detail (so is the genie and its lamp). We’ve got a new and burgeoning ability to make our ideas real, and a whole set of newly possible shapes those ideas can take. What shall we do with that? The genie can grant the wish, but it can’t tell you what to wish for. That’s always been the designer’s job.

An Anti-AI Moral Stance?

∞ Apr 11, 2026

AI is powerful and strange, but the technology itself is also deeply fraught. I’m generally optimistic about what AI will unlock, but let’s not be naive: AI threatens steep environmental, social, and creative costs. It raises urgent questions about copyright, labor, and what it means to make things. The cynical deployment of AI wrings out efficiencies at human expense. The creators of the leading AI models have engaged in the purest form of extractive capitalism to date, slurping up all the world’s content without compensating its creators. So yeah, that’s a lot.

Mainstream surveys from Pew, Quinnipiac, Gallup, and others show a general unease about trust and consequences with AI, even as usage increases. (A 2025 Gallup study found that 64% of Americans plan to “resist using AI as long as possible.” And indeed, a more recent Pew study finds that 65% don’t use AI much at work.)

But what if your feeling is stronger than unease? What if you find the whole enterprise so immoral that you can find no ethical use for AI?

“Mike in Massachusetts” asked that very question in the Work Friend advice column of the New York Times. The columnist’s pragmatic answer echoes how you might think about living within any system whose foundations or effects you can’t abide (late-stage capitalism, fossil fuels, fast fashion, industrial food, etc.):

What’s your goal in refusing to use A.I.? Saving your immortal soul? Throwing a small wrench in the enormous cogs of capital? Will refusing its use do anything to stop its relentless advancement, or just make you feel righteous?

A.I. boosters and critics alike often talk about the technology in revolutionary — if not apocalyptic — terms, which can make the risks of using A.I. (or not) feel overwhelming. To use A.I. feels like participating in a morally bankrupt process of technological exploitation, on the one hand; to refuse it feels like consigning yourself to obsolescence and unemployment.

But the stakes are just not that high. If you decline to use A.I., you may end up working a bit harder than your peers, but the process of adopting A.I. will occur over a long timeline, in stops and starts, and the risk of being left fatally and irreversibly behind is low. At the same time, your individual decision to use it won’t make a huge difference to the tech companies you’re wary of on their march to economic domination.

Which is why my recommendation is to refuse the choice you’re offering yourself. Why not use A.I. in circumscribed and deliberate ways to make your work life better? You won’t be refused entry to heaven because you prompted Claude to organize a data set and saved yourself some time and eye strain.

Meanwhile, you could channel your outrage into organized political action rather than an individual ethical choice by joining and supporting climate or labor advocacy groups that are thoughtfully working on the issue. If you’re morally against A.I., you owe yourself and the people in your community more than just a private protest.

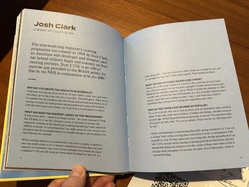

This Is Running

∞ Apr 11, 2026

If you’re a runner (or just running-curious), you’ll love the beautiful, thoughtful new book This Is Running by Raziq Rauf, author of the wonderful Running Sucks newsletter.

Raz was kind enough to send me a copy and even kinder to include me in the book (an interview about the origin and impact of Couch to 5K). But that’s not why I’m recommending it.

This Is Running is both a wonderful artifact of cultural research—UX researchers take note!—and a love letter to a world that Raz clearly adores, like so many of us. It’s an expansive exploration of why we run: What on earth motivates anyone not only to do this hard thing but to fall in love with it?

The book applies many lenses to that question: community, consumer culture, technology, identity, history, and place. Raz takes you from childhood playgrounds to high-mountain trails to everyday run clubs to the inside of tech labs and apparel companies. It goes deep into all the rituals and emotions that runners invest in the sport, individually and with others. It feels like every kind of runner is represented here.

It’s also a gorgeous book, well-designed and full of striking photography. It’s one of those rare books that is BOTH a cover-to-cover bedside read and a pride-of-place object on the coffee table.

I’ve already read the thing twice. I love it. Highly recommend.

When Using AI Leads to ‘Brain Fry’

∞ Apr 7, 2026

A BCG study of US-based workers found that intensive oversight of AI agents can cause cognitive exhaustion that the researchers call “AI brain fry.” The researchers shared their results in Harvard Business Review:

Participants described a “buzzing” feeling or a mental fog with difficulty focusing, slower decision-making, and headaches. This AI-associated mental strain carries significant costs in the form of increased employee errors, decision fatigue, and intention to quit. … Many participants used the words “fog” or “buzzing.” They described intensive back-and-forth with the tools, followed by an inability to think clearly, like a mental hangover, comprised of difficulty focusing, slower decision-making, and headaches, requiring several to physically step away from their computer to “reset.”

The researchers found that brain fry is closely linked to how much oversight and direct monitoring the agents required: a high rather than low degree of oversight required 14% more mental effort on the job and predicted 12% more mental fatigue and 19% greater information overload.

So even as productivity increases with agents churning out work, the personal cost of monitoring that work is high. The study found increased fatigue when AI use increased workload—producing more simply costs more—and also when users multitasked across tools. “As employees go from using one AI tool to two simultaneously, they experience a significant increase in productivity. As they incorporate a third tool, productivity again increases, but at a lower rate. After three tools, though, productivity scores dipped.”

A senior engineering manager in the study described the effect this way: “It was like I had a dozen browser tabs open in my head, all fighting for attention. I caught myself rereading the same stuff, second-guessing way more than usual, and getting weirdly impatient. My thinking wasn’t broken, just noisy—like mental static. What finally snapped me out of it was realizing I was working harder to manage the tools than to actually solve the problem.”

But the kind of task matters. When AI was used to replace routine or repetitive tasks—what the researchers call toil—burnout scores were 15% lower than for those who didn’t use AI that way. The researchers were careful to delineate the difference between burnout (emotional fatigue) and brain fry (mental fatigue). Using AI for unpleasant tasks still caused mental fatigue, but it improved emotional state: higher work engagement, more positive associations with AI, and more social connection with colleagues.

As a designer of intelligent interfaces, my takeaway is that people need help managing the overload of extremely productive but not entirely reliable agents. This help might be delivered through a mix of improved experience, tools, and processes that make oversight less effortful—or alternatively that help people regulate and reduce how much they engage in the first place.

“Just as we have norms for spans of control for managing humans, so, too, limits need to be defined for human + agent oversight and for agents alone,” the researchers wrote. “Tools that require less intense attention or working memory, which instead support creative mind wandering, foster social engagement, or scaffold skill development can produce even more business value but sustainably, while encouraging innovation, fostering growth, and sparking joy for users.”

That sounds like a design challenge.

How Many Products Does Microsoft Have Named ‘Copilot’?

∞ Apr 6, 2026

Names matter. That’s especially true when we’re all trying to establish shared meaning in something new.

Alas, at Microsoft, the name “Copilot” means very little beyond a hand-wavy gesture in the direction of “has AI.” Tey Bannerman is doing the heroic work of counting the products and features named Copilot, and he’s up to 80 and counting.

“There are now Copilots inside Copilots, Copilots for other Copilots, and a physical Copilot key on your keyboard for summoning them,” Bannerman writes. Microsoft applies the label to apps, features, platforms, a keyboard key, and an entire category of laptops. When everything is Copilot, nothing is Copilot.

This is a marketing problem for Microsoft, but it also points to a general fuzziness problem in the industry. The meanings of terms like “agent,” “copilot,” even “AI” itself have grown so diffuse as to be useless for common understanding even within discrete teams or organizations. (Somehow, even traditional automation is called agentic lately, but that’s a post for another time.)

One of the goals that Veronika and I had for writing the Sentient Design book was to create crisp vocabulary and definitions for different experience types. For what it’s worth, “copilot” is one of the four fundamental “postures” in Sentient Design from which all intelligent interfaces derive. Our definition:

Copilots provide continuous, context-aware assistance throughout an activity.

This always-on stance of constant monitoring and assistance is different from the other postures of tool, chat, and agent. Posture determines the system’s manner and relationship with the user. More than just differences in functionality, these postures describe the different ways users collaborate with intelligent interfaces:

- People use tools.

- People talk to chat.

- People delegate to agents.

- People are backed by copilots.

From those four postures, over a dozen novel experience patterns emerge. Sentient Design describes them all, along with the emerging UI and interaction patterns to make them useful.

When you’re making something new, apply some rigor to what you call it. A crisp definition not only helps you describe the thing, it helps you shape what you make—and make it distinct from its neighbors. It tells you (and your customers) not only what it is, but what it’s not.

Claude Dispatch and the Power of Interfaces

∞ Apr 1, 2026

Ethan Mollick reviewed research showing that chat interfaces impose a heavy cognitive load that undermines complex, specialized work. His conclusion: the future of AI-powered interfaces lies in specialized interfaces built on the fly in response to user intent and context:

Instead of having companies build a specialized interface for every kind of work, the AI generates the right interface on the fly. I suspect the future isn’t one interface to rule them all. It’s AI that generates the right interface for the moment, an agent on your desktop, a chart in a conversation, a custom app to solve a problem. We’re moving from adapting to the AI’s interface to the AI adapting its interface to you.

AI capability has been running ahead of AI accessibility. The models have been smart enough to do extraordinary things for a while now, but we’ve been making people access that intelligence through chatbots. And, as that cognitive load research shows, the chatbot format is actively working against them. As interfaces improve, we’re going to see what happens when a much larger number of people can actually use what AI is capable of. Every new interface that closes even part of that gap will feel like a leap in AI capability, even when the models haven’t changed (though they are still changing). My guess is that a lot of the “AI disappointment” people sometimes express comes not from the AI being bad, but from the interfaces being wrong. We built one of the most powerful technologies in recent history and then made people access it by typing into a chat window. That will change soon.

Friends, this is what Sentient Design is all about, and Veronika Kindred and I wrote an entire book about it, now available for pre-order.

(This isn’t the end of interface design, by the way, far from it. It’s an entirely new era of design. It’s super exciting and twisty and fun, and you’re needed more than ever to help make it work.)

“I Suppose You Would Call It an Interface for the User”

∞ Mar 14, 2026

I have enough skills in my Claude setup that I need something to help me remember them. Maybe we could call it a menu or something. And it would group things in a way that matched my mental model and helped me find things. I suppose you would call it an interface for the user.

Why AI agents need to learn to read the room

∞ Mar 14, 2026

Researcher Genna Bridgeman shared practical findings about how the social expectations of each communication channel shape AI interactions.

Bridgeman is a product researcher for Intercom, the company behind the Fin customer service agent. Fin is remarkably effective at managing routine support tasks, and it does so across live phone conversations, chat, email, and WhatsApp.

Each of those channels has its own etiquette, of course. The ways—and even the reasons—people use those channels create expectations for how info will be delivered. Bridgeman’s research found that when AI didn’t get the etiquette right, the result undermined trust as much as any human faux pas might:

When interactions felt wrong, users didn’t blame the answer. They questioned the system’s understanding. And once that doubt set in, every subsequent response was judged more harshly.

The core takeaways:

- In chat: Brevity, clarity, and structure are more important than completeness.

- In email: A reply without a formal greeting and a thorough (even dense) answer can seem dismissive or incomplete.

- On the phone: If the agent talks like a bot, users will start talking like a bot by simplifying language and avoiding nuance, which makes the system less effective.

- In WhatsApp: Users expect speed and continuity even more than in traditional chat, with little patience for re-establishing context, even in new sessions.

SaaS Is Dead?

∞ Mar 14, 2026

In his newsletter, Benedict Evans deflates the frothy talk that AI agents and assistants will eliminate vast swaths of software. That theory says that people will just tell the computer what they want; if anyone can use AI to spin up their own tool to do the job, then who needs ready-made software? (The theory is especially popular among engineers who already make their own tools.)

When you actually go and look at successful software, the users generally didn’t see the problem, didn’t see how you would solve it, and could not have sat down and thought about what should happen on every screen, how it should get built, and how you get everybody to use it. There is an enormous difference between knowing something about how your company and how your job works and being able to identify a set of problems and a set of workflows and think about how those could be automated.

In other words, the fact that you’re writing the code in natural language doesn’t mean that you don’t have to work out what the computer should do.

As AI’s capabilities grow, figuring out where to aim those superpowers becomes especially important.

Understanding the problem, imagining a fresh solution, and crafting the ideal experience… all of that is really hard to do when you’re burdened by the assumptions and expectations of how you’ve always done it. This might be counterintuitive, but the burden of experience means that the people in the trenches are often the wrong people to design the new solution. DIY tools will only take them so far.

Software design is harder than it looks. So is process design. The new era of intelligent interfaces doesn’t mean that we just toss users into the deep end and hope for the best.

Software and user experience are changing, but they’re not going away. Domain- and context-specific solutions will continue to be critical in order to give people the context and platform to do their work, especially inside complex organizations and processes. The future is much more likely to be AI embedded inside a million bespoke workflows, not a million bespoke workflows jammed into a single AI interface.

For product leaders and designers, that’s a big opportunity. What dramatically new tools and exceptional experiences can we create for our users?

The Shape of the Thing

∞ Mar 14, 2026

In his newsletter, Ethan Mollick takes stock of the past few months of dramatic, exponential improvement in AI agents. Their sudden improvement in delivering actually “reasonable and useful” results, he writes, is beginning to unlock radical changes in the shape of work (particularly in the software industry). But what shape will that be?

At the frontier, a small tier of software shops is allowing AI agents to build the software themselves—no human coding, no human review. That’s a lot more profound than the simple automation of tasks or processes; that changes the whole business model. That those experiments are possible to run at all is remarkable. Where will they land, and what does that mean for other industries? “AI is good enough to change how organizations operate,” Mollick writes, “and the experimentation is just getting started, even as models continue to improve.”

What will be the Thing that AI becomes? We still don’t know, but this feels like a foundational moment to shape that outcome. Right now is when the assumptions and applications of AI are beginning to firm up, not just the underlying technology:

When a technology is this powerful and this unsettled, the choices that individuals and organizations make right now matter more. We can see the shape of the Thing now, but we can still influence the Thing itself, and what it means for all of us. We clearly don’t have rules or role models for how AI gets used at work, in schools, or in government. That’s a problem, but it also means that every organization figuring out a good way to use AI right now is setting a precedent for everyone else. The window to shape the Thing may not last long, but it is here now.

You have a role. Your organization has a role. This is not a time to be passive.

Charlie’s Fake Videos for AI Literacy

∞ Oct 9, 2025

Charlie is a banking app for older adults, with a brand focused on financial safety, simplicity, and trust. They launched a fun and smart campaign to educate people about the risks of deepfake scams.

The system creates AI-generated videos for friends and family—customized with their first names and hometown—to deliver a message about AI fraud, all while escaped zoo animals run amok. It’s silly and entirely effective.

Most people don’t realize just how good AI video has become—and how easy it is to clone anyone’s voice or face now. Raising that awareness feels essential, especially for an older audience frequently targeted by scams.

All of us working with AI have a responsibility to improve literacy and cultivate pragmatic skepticism among our customers and users. The work of this new era of design is to be clear about AI’s risks and weaknesses, even as we harness its capabilities.

Encouraging appropriate skepticism is part of the work.